💚 At NVIDIA GTC 2026 we presented Robots ID. Where it all began. 🇺🇸

- Humandroid

- Apr 3

Updated: Apr 4

By Santiago Braña, CTO & Co-founder at Humandroid.

Coming back from San Jose with a strong feeling: we're closer than we think.

GTC 2026 wasn't just a conference. It was a real temperature check on where Physical AI actually stands today, and the reading was clear: the ecosystem is aligning faster than anyone anticipated.

Over four intense days, we joined deep-dive sessions on simulation, robotics, and digital twins. We worked inside hands-on labs with Omniverse, Isaac, and NVIDIA's full Physical AI stack. We connected with VCs, researchers, and founders from all over the world. And we shared what we're building at Humandroid.

Beyond the main stage, we joined side events from AWS, Oracle, and others. Different angles, same direction.

But the most valuable part wasn't the content. It was the confirmation.

Jensen Huang's Keynote

GTC stands for GPU Technology Conference, NVIDIA's annual event that started in 2009 as a technical gathering for GPU developers and has become, without exaggeration, the Davos of Artificial Intelligence.

For years it was a niche event. Today it fills the SAP Center in San Jose with tens of thousands of people, and Jensen Huang opens it with a keynote the entire industry watches live.

This year the message was unambiguous: Physical AI is the next frontier. Not as a future concept, but as a reality being built right now. Isaac Sim, GR00T N1.6, Cosmos, the Jetson stack: NVIDIA isn't betting on a single product. They're building the complete infrastructure for robots to learn, simulate, and deploy at scale.

Hearing that live, in that room, knowing what we're already building on top of that same infrastructure — that's one of those moments that confirms you're in the right place.

This is Robots ID and we presented it to the world at NVIDIA GTC 2026 💚

Robots ID didn't start with a product announcement. It started with a question we couldn't stop asking: why is there no operating system that makes humanoid robots actually trainable across different bodies, environments, and tasks?

The answer forced us to build one ourselves.

It began on January 1st, 2025, when we registered as official members of the NVIDIA Developer Program, our first formal step inside the ecosystem that would shape everything after. Shortly after, we were accepted into the NVIDIA Inception Program, connecting us to a global ecosystem of innovation in AI and robotics.

But theory only takes you so far. In August 2025, our partner BigDipper, official Unitree distributors in Argentina, provided us with our first humanoid robot so we could develop teleoperation and put our technology to the test in the real world. That moment was a turning point: we went from working in simulation to having a physical humanoid in our hands, executing movements, capturing data, and validating every layer of our pipeline.

The first real proof came with TGN (Transportadora de Gas del Norte), one of Argentina's most critical energy companies. We built a complete simulation environment in Isaac Sim, integrating Omniverse libraries and collecting data from the Unitree G1 robot through teleoperation. Real data, real environments, real results.

That work didn't go unnoticed. On October 29th, 2025, NVIDIA published a post on its official blog featuring what we were building, a signal that the architecture was pointing in the right direction.

On November 13th we made our second visit to NVIDIA's campus to meet with the robotics team and share our latest progress. It was a day of heavy rain, spent navigating puddles between the Voyager and Endeavor buildings, but nothing about the weather dampened the energy of that visit.

That day we had the privilege of meeting José Castaños, System SW Principal Engineer and the only Argentine working at NVIDIA. Talking with someone who shares our roots and works at the cutting edge of AI and robotics is one of those moments that's hard to put into words. A special thank you to Enrique Badaraco, who made that connection possible.

That same day we started planning joint initiatives for March 2026: more complex testing and direct collaboration with NVIDIA at every step.

What emerged from all of that is Robots ID: an end-to-end operating system for humanoid robots designed to capture, structure, and scale real-world robot intelligence. It enables humanoid robots to be trained directly from human behavior through teleoperation, motion capture, and multimodal sensing, turning physical experience into reusable intelligence.

Instead of relying on a single Vision-Language-Action (VLA) model, it composes multiple VLA and embodied foundation models based on task complexity, embodiment constraints, and deployment context, including NVIDIA GR00T N1.6, Cosmos, UBTECH Thinker, and Unitree's UnifoLM-VLA.

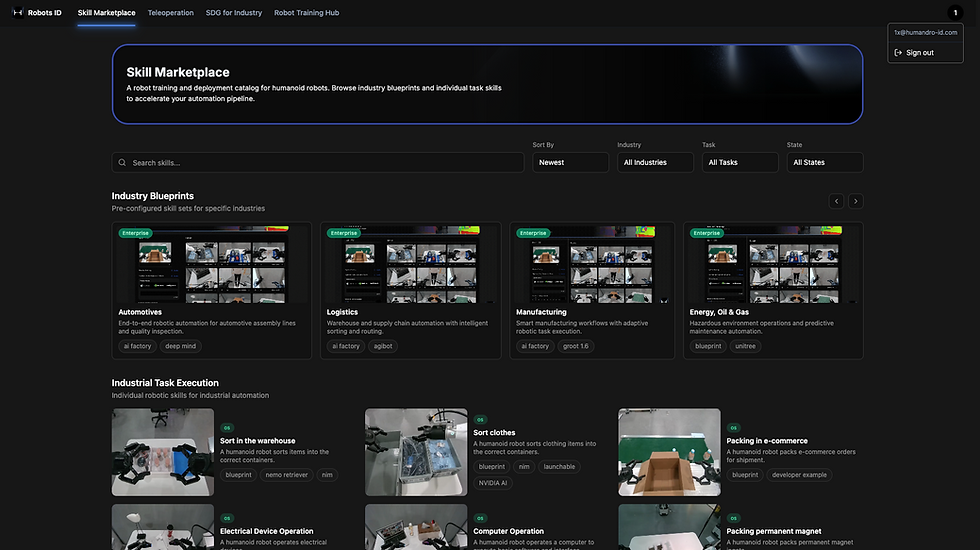
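To make the idea of composing models concrete, here is a minimal sketch of how a selection step could filter a model catalog on the three criteria mentioned above. It is an illustration under stated assumptions, not the Robots ID implementation: `TaskRequest`, `ModelProfile`, `select_models`, the 1 to 5 complexity scale, and the placeholder entries `model_a`, `model_b`, and `model_c` are all hypothetical, and none of the numbers describe any real model.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TaskRequest:
    complexity: int   # hypothetical scale: 1 (simple) to 5 (long-horizon)
    embodiment: str   # robot body the task will run on
    on_device: bool   # True when inference must run on the robot itself


@dataclass(frozen=True)
class ModelProfile:
    name: str
    max_complexity: int      # hardest task level this model is assumed to handle
    embodiments: frozenset   # bodies this model is assumed to support
    runs_on_device: bool     # whether it fits the on-robot compute budget


def select_models(task, catalog):
    """Return the names of all compatible models, least capable first."""
    candidates = [
        m for m in catalog
        if m.max_complexity >= task.complexity            # task complexity
        and task.embodiment in m.embodiments              # embodiment constraint
        and (m.runs_on_device or not task.on_device)      # deployment context
    ]
    return [m.name for m in sorted(candidates, key=lambda m: m.max_complexity)]


# Placeholder catalog; the entries are invented for illustration only.
CATALOG = [
    ModelProfile("model_a", 2, frozenset({"unitree_g1"}), True),
    ModelProfile("model_b", 5, frozenset({"unitree_g1", "other_humanoid"}), False),
    ModelProfile("model_c", 4, frozenset({"other_humanoid"}), True),
]

if __name__ == "__main__":
    print(select_models(TaskRequest(2, "unitree_g1", False), CATALOG))
```

Returning every compatible model, rather than a single winner, leaves room for a later stage to combine or rank them, which is closer to composition than to a plain lookup.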

Robots ID is not just a control system. It is a learning engine. Every human demonstration, every teleoperation session, every real-world deployment becomes training data for the next generation of robots, feeding a Skills Marketplace where capabilities can be shared, reused, and scaled across fleets, industries, and embodiments.
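As a rough sketch of that flow, the snippet below shows one way demonstrations could accumulate into shareable skills that are then looked up per embodiment. Everything in it is an assumption made for illustration: `Skill`, `SkillsRegistry`, and the skill and robot names are hypothetical and do not reflect the actual Skills Marketplace design.

```python
from dataclasses import dataclass, field


@dataclass
class Skill:
    name: str
    embodiments: set = field(default_factory=set)  # bodies with recorded demos
    demonstrations: int = 0                        # total demos collected so far


class SkillsRegistry:
    """Hypothetical in-memory registry; a real marketplace would persist data."""

    def __init__(self):
        self._skills = {}

    def record_demonstration(self, skill_name, embodiment):
        # Each demonstration enriches an existing skill or creates a new one.
        skill = self._skills.setdefault(skill_name, Skill(skill_name))
        skill.embodiments.add(embodiment)
        skill.demonstrations += 1
        return skill

    def skills_for(self, embodiment):
        # Alphabetical list of skills that have data for the given body.
        return sorted(
            s.name for s in self._skills.values() if embodiment in s.embodiments
        )


if __name__ == "__main__":
    registry = SkillsRegistry()
    registry.record_demonstration("open_valve", "unitree_g1")
    registry.record_demonstration("open_valve", "other_humanoid")
    print(registry.skills_for("other_humanoid"))
```

Keying skills by name and tracking embodiments separately is what lets a capability first demonstrated on one robot show up for another once data for that second body exists.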

That's what we brought to NVIDIA GTC 2026. Not a concept. A working system, built from real deployments, validated in the field.

Working alongside NVIDIA's robotics team

Some of the richest moments at GTC don't happen on the main stage. They happen in the labs, in the working sessions, in conversations with the teams building the infrastructure from the inside.

We joined working groups alongside NVIDIA's robotics team: people working directly on Isaac, GR00T, and the tools we use every day. Sitting at the table with them, understanding their roadmaps, showing them the real operational problems we're solving: that's irreplaceable.

And the conclusion of those conversations was always the same:

This is no longer about who has the best model.

The model is the baseline. The real differentiation, what will separate the companies that matter from the ones that don't, is systems thinking. Who can take a robot, a real environment, and a real operational problem and make them work together reliably? Not in a lab. Not in a demo. In production.

That's a fundamentally different challenge than training a better neural network. It requires hardware integration, safety design, deployment infrastructure, and deep domain knowledge. It requires building for the messiness of the real world, not the cleanliness of a simulation.

That's the game we're playing at Humandroid.

This is what we're building: Robots ID

At Humandroid we're building Robots ID, an end-to-end operating system for humanoid robots. Cross-embodiment support. VR teleoperation. Multi-VLA intelligence combining NVIDIA GR00T and Cosmos. A Skills Marketplace for deployable robot capabilities.

The bottleneck for humanoid robotics adoption isn't the hardware. The hardware is ready, or getting there fast. The bottleneck is the software layer that makes robots genuinely usable across different bodies, environments, and tasks.

That's what we're solving. And GTC confirmed we're solving the right problem.

What struck me most walking out of the San Jose Convention Center wasn't any single session or announcement. It was the feeling of being inside something early, but not too early. The moment right before the curve steepens.

The infrastructure is here. The talent is organizing. The investment is flowing. The industrial demand is real.

We came back from GTC not just energized, but clearer. The path forward is harder than a demo and more meaningful than a prototype. It's about building systems that work in the real world, at scale, over time.

That's where we're focused. And we're not building it alone.

A special thank you to Edmar Mendizabal, the NVIDIA Inception team, and the entire NVIDIA ecosystem for supporting us and connecting us with the global humanoid robotics community. Your trust and guidance have been a fundamental part of this journey, from our first conversations to the GTC stage.

Incredible! 🦾

🇦🇷

💚